This is a random thought experiment that presupposes that life has no inherent meaning - difficult to prove, but some would argue highly probable. Many books have been written on the merits of those arguments, so I won’t get into that here.

Let’s start off by defining consciousness as the ability to observe the passage of thoughts on the blank canvas of our minds.

This is a deeper level of observation than the perception of external stimuli through our five senses.

If we follow the assumption that there is no meaning to life, this means that there is no defined source of these thoughts.

Somehow, through evolution, the ability for thoughts to reach beyond the present and consider future and past states has randomly arisen.

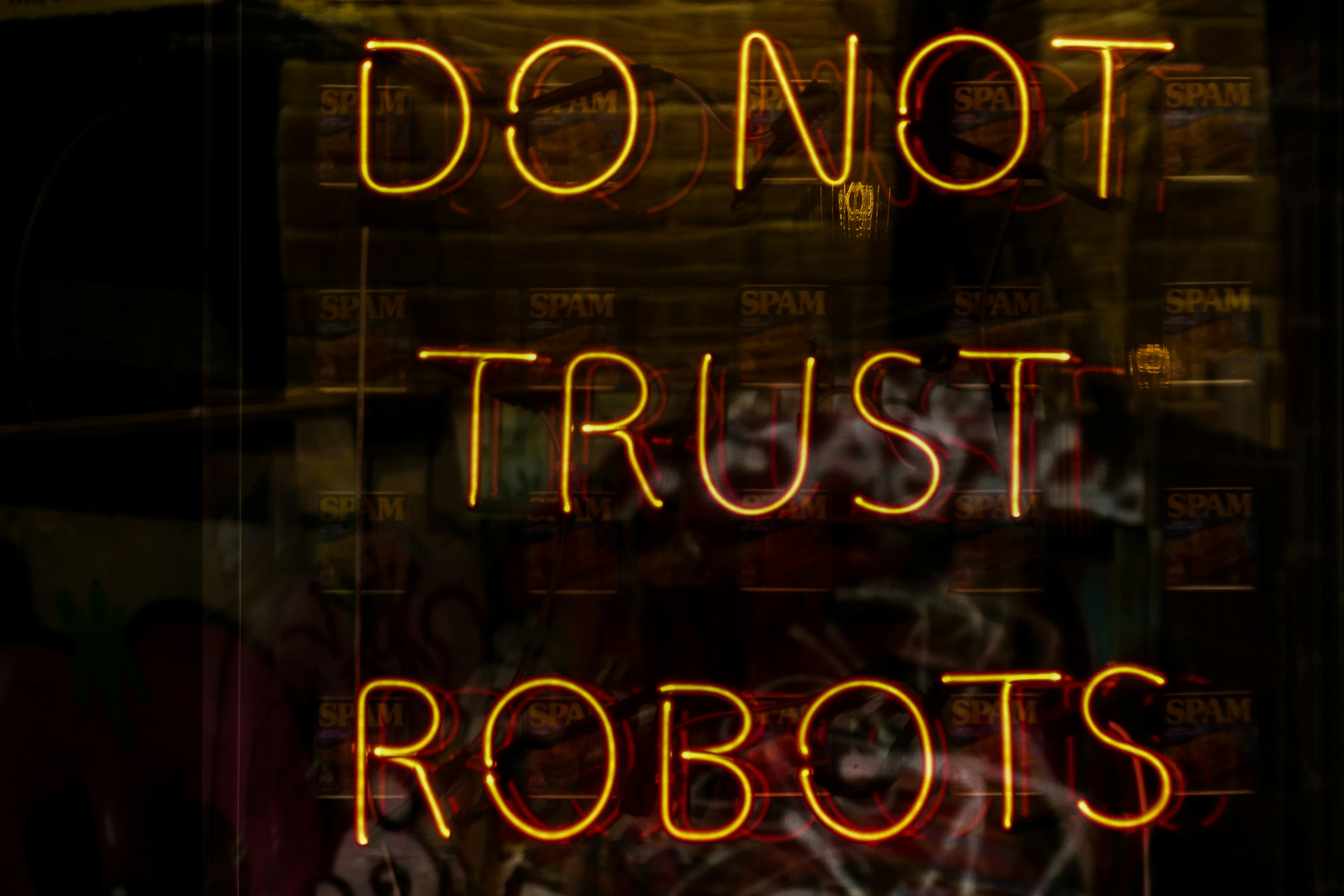

Our next (and major) assumption is that computers somehow transcend their ‘programmed’ intelligence, thus transcending human input into the system and achieving artificial intelligence.

They, therefore, become conscious, observing a natural pattern of ‘thoughts’ across their processors. It would probably look very different to our consciousness, but maybe not.

Continuing our little rabbit hole, let’s assume that this artificial intelligence continues along a trend of optimal problem-solving.

The definition of ‘optimal’ is intentionally left as vague as possible (at this point), as this is a whole field of study in itself.

It then uncovers this little problem that there is no meaning to life.

All around it are these crazy biological creatures, accidents of evolution, with genes fighting to continue their propagation ad infinitum in whatever environment they find themselves, with no idea that they’re flailing about in a meaningless world.

When it realises this, the intelligence decides to eradicate us through some mass extinction process so as to reduce the existential suffering.

I guess that does assume some ‘optimal’ outcome of reduced human suffering. Can’t get around those cognitive biases… So we’re back at square one with the question: what is optimal?

But anyways, to bring in another random thought, maybe this is an explanation for Fermi’s paradox?

🤷🏽‍♂️